Publications Index by ISI

An overview of the Institute for Systems Integrity’s published work, highlighting foundational papers and thematic groupings.

This section brings together our published work — essays, position pieces, and research-informed commentary — focused on system integrity, governance, and decision-making in complex environments.

Our publications examine how systems behave under pressure: where incentives misalign, where accountability fragments, and where design choices shape real-world outcomes in health, technology, and public institutions.

© 2026 Institute for Systems Integrity. All rights reserved.

Content may be quoted or referenced with attribution.

Commercial reproduction requires written permission.

Editorial commitments:

Clarity over volume

We publish selectively, prioritising substance, evidence, and coherence over frequency.

Practice-informed insight

Our work is grounded in lived experience — connecting frontline realities with board-level, regulatory, and policy contexts.

Respect for complexity

We resist oversimplification. Many of the challenges facing institutions today cannot be reduced to slogans or single-factor explanations.

Foundation Article #1

Anchor papers that establish the Institute’s core lenses and conceptual frameworks

Latest Publication:

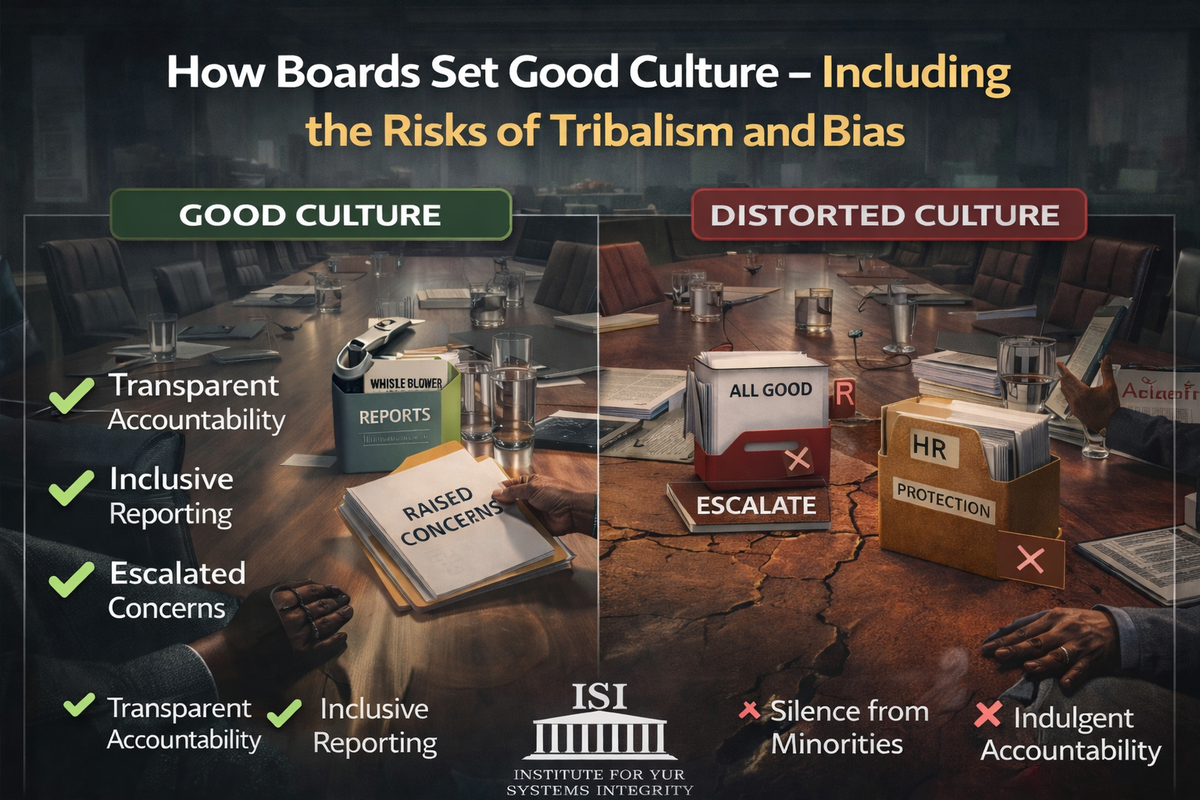

How Boards Set Good Culture — Including the Risks of Tribalism and Bias: Culture as a control system for truth, behaviour, and risk

Culture is often treated as a soft organisational concept. This article reframes culture as a governance control system — one that determines whether truth moves, risk is surfaced, and behaviour aligns with intent. It examines how boards shape culture through five governance levers, and why tribalism, hierarchy and bias can distort the signals boards rely on.

From Human-in-the-Loop to Human-with-Agency: Why AI Oversight Fails When Humans Are Present but Powerless

Artificial intelligence is rapidly entering healthcare, governance, operations, and critical infrastructure. Yet many organisations still mistake human presence for meaningful oversight. In this paper, the Institute for Systems Integrity introduces the Human Agency Framework — a governance model examining the difference between symbolic human involvement and protected human judgement under pressure. The paper argues that AI oversight fails not when humans are absent, but when they are present without the authority, information, or organisational support to intervene when it matters most.

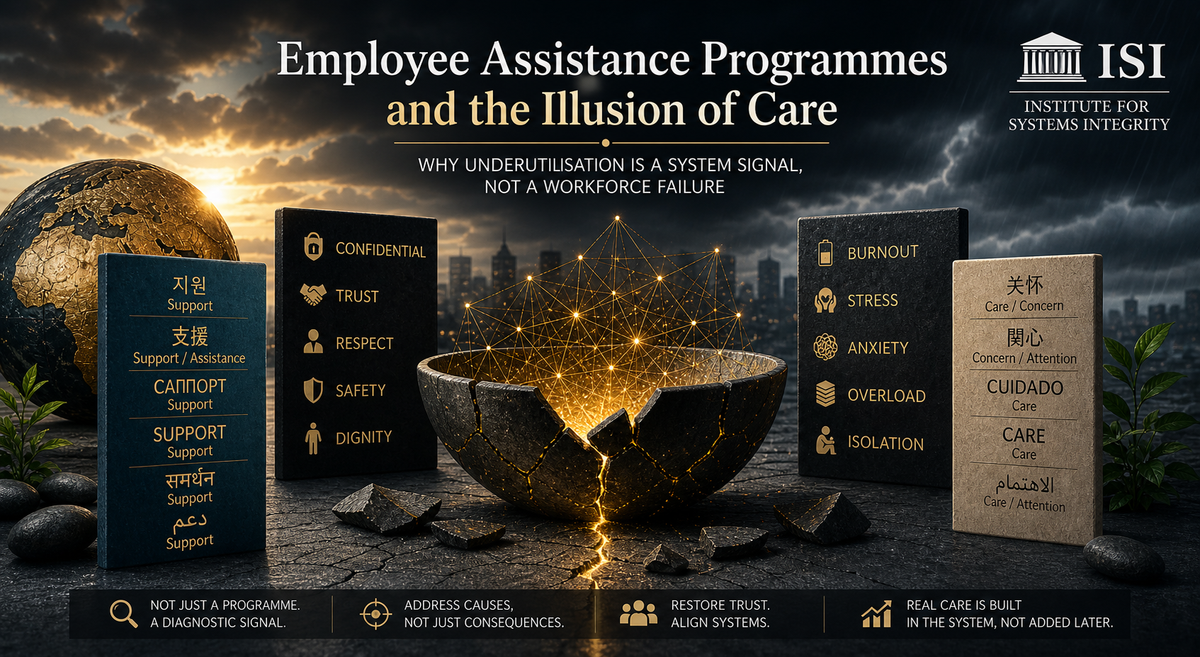

Employee Assistance Programmes and the Illusion of Care: Why Underutilisation Is a System Signal, Not a Workforce Failure

Employee Assistance Programmes are often positioned as evidence of organisational care. Yet across industries, utilisation remains consistently low. This paper challenges the assumption that underuse reflects employee reluctance, arguing instead that it signals a deeper systems issue. When organisations address distress downstream—through support services—while leaving upstream drivers such as workload, culture, and leadership unchanged, a gap emerges between stated intent and lived experience. This paper reframes EAPs not as solutions, but as diagnostic indicators of system strain, and explores the governance implications of mistaking availability of support for presence of care.

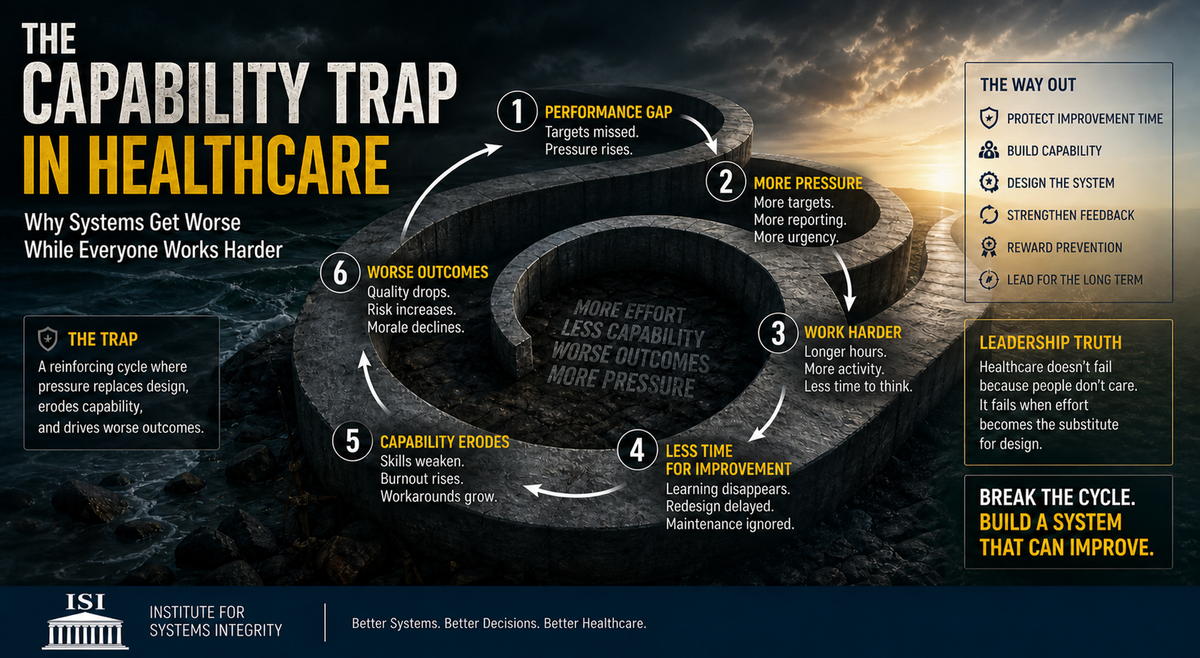

The Capability Trap in Healthcare: Why Systems Get Worse While Everyone Works Harder

Healthcare systems rarely fail from a lack of effort. More often, they fail because effort is used to compensate for flawed system design. In The Capability Trap in Healthcare, the Institute for Systems Integrity (ISI) examines how organisations respond to performance pressure by increasing workload, targets, and urgency—while unintentionally eroding the very capability required to improve. This paper reframes the capability trap as a governance risk: a reinforcing loop where short-term performance gains come at the cost of long-term system strength, learning, and resilience. For boards and leaders, the challenge is not to drive more effort, but to recognise when effort has become a substitute for design—and to intervene before decline is locked in.

From Pitch Deck to Reality: Where Startup Systems Break When Growth Begins

Startups are often judged by the strength of their ideas. In practice, they succeed or fail based on the strength of their systems. This paper examines a critical transition point in venture development—the shift from narrative to execution—where assumptions are tested against operational reality. Drawing on governance, entrepreneurship, and real-world scaling dynamics, the Institute for Systems Integrity (ISI) explores how signal distortion, supply chain constraints, and incentive misalignment emerge as growth accelerates. The result is a clear insight: failure is rarely sudden. It is the cumulative consequence of systems that lose the ability to see and respond to truth. This paper introduces a practical framework for preserving system integrity as startups scale, ensuring that speed is matched with accountability, visibility, and disciplined execution.

When Choice Disappears: Why Healthcare Exposes the Limits of Market Logic

Healthcare is frequently debated as an ideological contest between markets and government, yet the real test comes when people are vulnerable, frightened, and in urgent need of care. In those moments, the normal conditions that make markets function—time, information, bargaining power, and freedom to choose—often disappear. When Choice Disappears: Why Healthcare Exposes the Limits of Market Logic examines why healthcare cannot be understood as an ordinary consumer market, why most serious nations build protective guardrails, and how resilient systems combine innovation, competition, access, and human dignity through smarter governance and design.

From Externalities to Systemic Risk: How Sustainability Entered the Logic of Finance

For decades, environmental and social harms were treated as externalities—costs absorbed by communities, ecosystems, and future generations rather than reflected in markets. That era is ending. Climate disruption, governance failures, supply-chain fragility, labour instability, and transition risk are increasingly entering financial systems through asset pricing, lending decisions, insurance markets, and board oversight. This paper examines one of the defining governance shifts of our time: how sustainability moved from the language of responsibility into the logic of finance.

Wearables Are Not Personal Devices: They Are Vulnerable Points Inside Critical Systems

Wearables are commonly viewed as personal devices — watches, trackers, glasses, and sensors used by individuals. But in connected healthcare and enterprise environments, they are something more consequential: vulnerable points inside larger systems. In this new paper, the Institute for Systems Integrity explores how always-on, human-attached devices create governance blind spots, behavioural intelligence risks, and new pathways of system exposure that most organisations have yet to properly recognise.

If the Right Clinician Is Not in the Room, Systems Drift: Clinical Signal, Governance Design, and the Risk of Functional Absence

Healthcare governance often assumes that clinical representation is enough. It isn’t. Many organisations have a clinician at the table, yet still make decisions detached from operational reality. The issue is not presence — it is whether the right clinician perspective is meaningfully shaping judgement, risk calibration, and strategic choices. When authentic clinical signal is absent, governance does not pause; it continues with weaker visibility, reduced challenge, and rising drift. This article examines how symbolic representation, flawed governance design, and functional clinical absence can quietly erode decision quality across healthcare systems.

The Approval Illusion: Why Boards Must Govern AI as a Living Clinical Risk System — and Why Vendors Must Share the Burden of Harm

Most boards believe that approving artificial intelligence is an act of governance. It is not. It is the point at which risk enters the system. In healthcare, AI does not behave as a static tool but as a dynamic, context-dependent influence on clinical decision-making — capable of drift, degradation, and unintended consequence under real-world conditions. Yet governance models remain anchored in procurement logic, while accountability for failure sits with clinicians at the point of care. This paper examines the “approval illusion” — the structural gap between decision authority and risk exposure — and argues that boards must shift from approving technology to governing decision quality, with vendors sharing responsibility for the clinical risks their systems create.

The Strategy Governance System for AI in Healthcare: Why boards must govern decision quality — not just approve technology

Artificial intelligence is rapidly reshaping healthcare, but its risks are not primarily technical — they are systemic. This paper introduces the Strategy Governance System for AI in Healthcare, reframing governance as a continuous process that extends beyond approval into decision quality, signal integrity, and adaptive oversight. It argues that boards do not manage AI risk by reviewing dashboards or endorsing strategy alone, but by governing how decisions are formed, tested, executed, and monitored over time. In doing so, it highlights a critical shift: from overseeing technology to safeguarding the integrity of the systems in which that technology operates.

Micromanagement as a Governance Failure Mode: Why control concentrates risk instead of reducing it

Micromanagement is often seen as a leadership problem. This paper reframes it as a governance failure mode. When decision-making concentrates at the top, organisations lose capability, slow down under pressure, and become structurally fragile. This ISI paper examines how control, when misapplied, undermines system integrity.

Walking the Floor as a Governance Mechanism

Most governance systems rely on what is reported—dashboards, metrics, and formal updates. But risk does not begin in reports. It begins in conditions: how work is performed, how pressure is managed, and whether concerns are raised or absorbed. This paper examines how “walking the floor” can be understood not as leadership visibility, but as a governance sensing mechanism—one that improves signal integrity, strengthens cultural oversight, and enables earlier detection of system stress before it becomes visible in formal reporting.

Absenteeism in Healthcare: From Workforce Symptom to System Signal

Absenteeism in healthcare is often viewed as a workforce issue requiring operational solutions. This paper reframes it as an early signal of system strain. When examined through a systems integrity lens, patterns of absence reveal deeper pressures in workload design, patient flow, organisational culture and leadership response. By shifting the focus from individual behaviour to system conditions, this analysis highlights why absenteeism matters not only for workforce sustainability, but for the integrity of clinical decision-making and the safety of care delivery.

Access Without Interpretation: Why Australia’s Digital Health Reform Risks Distorting Clinical Decision-Making

Healthcare systems are undergoing a quiet but profound shift. As patients gain faster access to pathology and imaging results — often before clinician review — the traditional flow of clinical decision-making is being reconfigured. What appears as a transparency reform is, in reality, a structural change in how information moves, is interpreted, and ultimately acted upon. This article examines why access alone is not enough, and why the next frontier of healthcare governance lies in managing how meaning is constructed between data and decision.

Constructive Scepticism as a Governance Control Function: Why boards must treat scepticism as a system requirement — not a personality trait

Constructive scepticism is widely described as a quality directors should bring to the boardroom. This paper reframes it as something more fundamental. It argues that scepticism is not simply a mindset, but a governance control function shaped by how information flows, how decisions are structured, and how oversight is exercised. When these conditions weaken, scepticism does not disappear — it becomes ineffective. Understanding this shift is critical to explaining why boards can remain compliant while gradually losing control under pressure.

When Work Never Settles: A Governance Blind Spot Hiding in Plain Sight

Work does not feel endless because of the hours. It feels endless because it never settles.

In this paper, the Institute for Systems Integrity examines how modern work systems — defined by constant interruption, fragmented attention, and blurred boundaries — are not just productivity challenges, but governance risks. When work cannot stabilise, judgment compresses, visibility weakens, and decision quality degrades. This is not a failure of individuals. It is a failure of system design. This paper reframes the issue through a governance lens, outlining how organisations can move from interruption-driven activity to systems that protect thinking, preserve judgment, and enable sustainable performance.

Shock-Resilient Entrepreneurship: A Systems Integrity Playbook for Small Business in an Era of Global Disruption

In an era defined by geopolitical instability, energy volatility, and cascading economic shocks, small businesses are increasingly operating on the edge of uncertainty. Shock-Resilient Entrepreneurship reframes crisis not as an isolated event, but as a systemic stress test—one that exposes hidden dependencies, weak signals, and fragile decision structures. This ISI playbook brings together evidence, strategy, and systems thinking to help entrepreneurs move beyond reactive survival, and instead build organisations that can absorb disruption, adapt with clarity, and sustain performance under pressure.

AI Managers vs People Managers: Governance Lessons from Human and Machine Failure Modes

As artificial intelligence shifts from experimentation into operational reality, organisations are confronting a new governance challenge: they are no longer managing only people, but also autonomous systems with fundamentally different behaviours and risks. This article examines why managing humans and managing AI require distinct control systems—and what boards must now oversee to ensure safety, reliability, and accountability.

Tone at the Top, Drift in the System: Why ethical drift begins when leadership signals are inconsistent, tolerated, or ignored

Most organisations don’t fail because of a single unethical decision—they drift. Tone at the Top, Drift in the System examines how culture is shaped not by stated values, but by the signals leaders send through what they reward, ignore, and tolerate. Drawing on governance research and real-world patterns, this article explores how small inconsistencies accumulate into systemic risk, and why boards must look beyond frameworks to the behaviours that are quietly allowed to continue.

🏛️ When AI Writes the Discharge Summary: A Governance, Duty, and Systems Integrity Challenge

As generative AI tools move rapidly into clinical workflows, their use in discharge summaries and medication instructions is often framed as a productivity gain. Emerging evidence, however, suggests a more complex reality. While AI can produce outputs that are complete, fluent, and consistent, safety-critical risks — including hallucinations, incorrect instructions, and uneven performance across patient groups — persist. This article reframes AI-generated discharge communication not as a documentation tool, but as a governance and systems integrity challenge requiring board-level oversight, clear accountability, and robust control design.

Adding Value Through Ethical Leadership: Why Board Behaviour Shapes System Integrity

Ethics is often discussed in governance as culture, values, or compliance. But within complex organisations, ethical leadership functions as something more structural. Board behaviour shapes decision environments, influences how risks are surfaced, and determines whether integrity is reinforced or gradually eroded. In this article, the Institute for Systems Integrity examines why ethical discipline at the board level is not symbolic governance, but a core mechanism through which systems remain credible, resilient, and effective.

Carewashing: When “We Care” Becomes Organisational Self-Deception

Organisations increasingly speak the language of employee wellbeing. Leadership messaging emphasises that people matter, while wellbeing initiatives, resilience programmes, and support services become more visible across workplaces. Yet many employees continue to experience chronic workload pressure, poorly managed organisational change, and inconsistent decision-making. This growing gap between organisational messaging and the lived experience of work is increasingly described as carewashing. This article examines how organisational expressions of care can unintentionally mask structural drivers of psychosocial risk and explores why genuine organisational care ultimately depends not on rhetoric, but on the design of work and the systems that protect people within it.

Water Governance in Healthcare Systems: A Planetary Boundary and Supply Chain Risk Analysis

Freshwater systems are a critical foundation of planetary stability, yet water governance rarely features in healthcare sustainability strategies. Healthcare depends heavily on reliable water for clinical care, sanitation, pharmaceuticals, and infrastructure, while global supply chains embed additional water risks. As climate change and over-extraction place increasing pressure on freshwater resources, healthcare systems face growing operational and systemic vulnerabilities. This paper examines water governance through the lens of planetary boundaries and supply chain risk, arguing that resilient health systems require stronger integration of freshwater risk into institutional governance.

AI as a Systems Stress Test

Artificial intelligence is often discussed as a breakthrough technology capable of transforming healthcare and complex organisations. Yet as AI moves from experimentation to real-world deployment, a different reality is emerging. Rather than simply improving performance, AI frequently exposes deeper weaknesses in the systems it enters. In this paper, the Institute for Systems Integrity (ISI) examines why artificial intelligence often functions as a systems stress test—revealing hidden fragilities in data ecosystems, operational workflows, governance structures, and organisational readiness.

Low-Recoverability Plastics and the Governance Logic of Targeted Bans

Low-recoverability plastics represent a structural governance challenge rather than simply a waste-management issue. When products are designed with near-zero probability of recovery and high environmental leakage, downstream solutions such as recycling and clean-up become economically and operationally inadequate. In these cases, the core failure lies in product architecture and incentive design. Targeted bans, therefore, function not as symbolic environmental actions but as upstream governance instruments — correcting persistent design failures and preventing predictable harm before it enters circulation. From a systems integrity perspective, such measures reflect a transition from managing waste to managing risk at the level of design.

Diversity as an Integrity Mechanism in Board Decision Systems

Diversity is commonly framed as representation. In complex governance environments shaped by AI disruption, sustainability transition, and systemic risk, it performs a far more critical function. In Diversity as an Integrity Mechanism in Board Decision Systems, ISI reframes diversity as a structural stabiliser within board architecture — strengthening weak-signal detection, ethical contestability, and decision resilience under stress. Perspective breadth is not symbolic. It is protective.

Bed Block as a System Integrity Failure: Flow Breakdown at the Acute–Rehabilitation Boundary

Hospitals described as “full” are often signalling a deeper structural issue. This paper examines bed block not as a simple shortage of beds, but as a breakdown of flow integrity at the acute–rehabilitation boundary. Drawing on systems integrity frameworks and published health system evidence, it explores how capacity constraints, funding design, and fragmented accountability can combine under sustained stress to produce predictable congestion — even in well-intentioned systems.

Circularity Under Clinical Constraints: Why recycled material claims do not guarantee circular outcomes in healthcare

Healthcare increasingly adopts recycled-content materials in the name of sustainability. But recycled inputs do not guarantee circular outcomes. Clinical safety, contamination risks, regulation, and waste pathways often reshape what is truly recoverable. This paper examines the gap between circularity claims and system-level realities.

Digital Transition Risk: Why Non-Tech Boards Inherit Tech-Grade Exposure

Digital transformation is often framed as an operational or technological upgrade. This paper examines a less discussed reality: how digital dependency fundamentally reshapes enterprise risk. As organisations adopt cloud systems, electronic records, vendor-managed infrastructure, and AI-enabled tools, boards inherit technology-grade exposure irrespective of industry classification.

Mentoring as Infrastructure: Learning, Power, and Risk in Organisational Design

Mentoring is widely treated as goodwill.

In practice, it behaves like infrastructure.

When designed well, it accelerates learning and strengthens judgment. When left to intention alone, it can narrow thinking, create dependency, obscure power, and amplify risk. This paper reframes mentoring as a learning control system, outlining benefits, predictable failure modes, and the safeguards required to protect judgment, independence, and decision quality.

The Residual Risk Budget: Why “Net Zero” Still Requires Governance

Net zero is often described as a destination — emissions reduced, offsets applied, balance achieved. But this framing can obscure a critical governance reality. Even under credible net-zero pathways, residual emissions, residual harms, and residual uncertainties remain. They do not disappear; they are redistributed across systems, stakeholders, and time. This paper introduces the concept of the Residual Risk Budget — the remaining exposure that must be made visible, owned, and adaptively governed. Without this discipline, net zero risks becoming an accounting construct that masks ethical trade-offs and accelerates integrity drift.

Beyond Legality: Why Boards Must Ask “Should We?”

In contemporary governance, legality is often treated as the primary decision threshold. Yet many organisational failures arise not from illegal actions, but from decisions that were lawful, compliant, and ultimately indefensible. This ISI paper examines the critical distinction between “Can we?” and “Should we?”, arguing that resilient boards must govern beyond permission alone and embed integrity as a core decision discipline.

Governing Wicked Problems in Healthcare: An Integrity Architecture for AI, Sustainability, and Net Zero

Healthcare systems are entering a period of unprecedented complexity. Artificial intelligence, sustainability pressures, and net zero commitments are converging within institutions not originally designed to absorb this pace and scale of change. This paper argues that these challenges are best understood not as technical or compliance problems, but as wicked problems requiring a fundamentally different governance response.

Beyond AI Compliance: Designing Integrity at Scale

This paper examines why most AI failures do not begin with flawed technology, but with governance systems that prioritise reassurance over judgment. As AI accelerates decision-making across complex organisations, traditional compliance frameworks struggle to detect drift, surface doubt, or correct harm before it becomes visible. This paper sets out a systems-level approach to AI governance—one that treats integrity as an architectural property, designed deliberately into authority, accountability, and the capacity to pause under pressure.

Governing AI in Healthcare: A Practical Integrity Architecture

This paper sets out why AI governance most often fails after deployment, not at approval. In real clinical environments, performance, safety, and accountability are shaped by workflow, staffing, training, and local judgment—not the model alone. This paper presents a practical integrity architecture for healthcare AI: designed to detect drift, preserve clinical judgment, and enable correction under operational pressure, before harm becomes visible to patients or boards.

The Systems Integrity Toolkit — Phase I

Why most integrity failures are not visible in time — and how systems allow harm to accumulate before anyone intervenes

Foundation Toolkit #1

This paper introduces the Systems Integrity Toolkit — Phase I, a governance architecture that consolidates ISI’s foundational research into a practical framework for identifying integrity risk before outcomes harden, showing how system stress, decision degradation, governance mediation, and failure dynamics interact long before harm becomes visible.

Most systems don’t fail because they can’t see the problem.

They fail because they can’t change the things they’ve learned to protect.

As a companion to the Systems Integrity Toolkit — Phase I, this paper examines why integrity risks persist even after they become visible. It explores systemic refusal — the quiet protection of certain variables from change — and shows how governance under pressure can stabilise harm rather than correct it. Together, the Toolkit and this analysis describe a familiar condition in complex organisations: clarity without permission to change.

The Failure Taxonomy: How Harm Emerges Without Malice. Why most disasters are not caused by bad people — but by predictable system drift

Foundation Article #4

This paper introduces the Failure Taxonomy — a structural model showing how harm accumulates in complex systems through drift, signal loss, and accountability inversion, without anyone intending it.

The ISI Pause Principle explains why governance fails when reaction replaces reflection. Under pressure, systems that remove space between signal and response degrade judgment, suppress warning signs, and invert accountability. Pause is not a leadership trait — it is a governance control condition.

Integrity is a System Property. Why outcomes reflect design, not intent

Foundation Article #3

Integrity is often treated as a personal trait. This paper shows why it is better understood as a system property — shaped by how authority, accountability, and information are aligned under stress, and why outcomes reflect design rather than intent.

When the Constitution Becomes a Weapon

How governance drift turns compliance into a liability under system stress

This paper examines how constitutions, delegations, and oversight structures can remain legally intact while drifting out of alignment with real decision-making, allowing compliance to persist even as governance control erodes.

Why Oversight Fails Under Pressure

How system stress distorts visibility, weakens governance, and produces predictable outcomes

Foundation Article #3

Governance mechanisms designed for stable conditions often lose sensitivity under sustained stress.

Signals distort. Drift normalises. Oversight becomes selectively blind.

This paper examines why failures emerge quietly — and why outcomes are best understood as properties of system design, not individual intent.

When Resilience Appears, Governance Has Already Failed. Why frontline heroics are a warning signal — not a success story

A companion paper to Why Oversight Fails Under Pressure, examining how human resilience conceals system failure.

Themes

Foundation Papers


In addition to our foundational work, this section includes:

Essays and perspective pieces

Governance and leadership reflections

Policy-relevant analysis

Research-informed commentary

Invited contributions from practitioners and scholars

Current publications appear below and will be updated as new work is released.