AI Managers vs People Managers: Governance Lessons from Human and Machine Failure Modes

As AI agents move into operational workflows, organisations face a new governance challenge: managing humans and managing autonomous systems are different problems. This article explains why failure modes, controls, and accountability must be designed differently, and what boards must now ask when overseeing AI.

Institute for Systems Integrity (ISI)

Co-authors:

Kishore Madhusudanan, B.Tech, CISM, EMBA (Melbourne Business School)

Security Architect | Zero Trust & Cloud Security Resilience Architecture | Executive MBA | Certified Information Security Manager

Dr Alwin Tan, MBBS, FRACS, EMBA (Melbourne Business School)

Senior Surgeon | Governance Leader | HealthTech Co-founder

Harvard Medical School — AI in Healthcare

Australian Institute of Company Directors — GAICD candidate

University of Oxford — Sustainable Enterprise

(Disclaimer: The views and opinions expressed in this post are strictly those of the authors in their personal capacity. They do not necessarily reflect the official policy or position of any current or former employer, organisation, or affiliate.)

Artificial intelligence is often described as a technology shift.

From a systems integrity perspective, it is equally a management shift.

Because organisations are no longer managing only people.

They are increasingly managing autonomous, probabilistic, adaptive systems.

And while this change appears incremental — a tool here, an agent there — it introduces a fundamental distinction many leaders still blur:

Managing humans and managing AI systems are not the same management problem.

The Management Category Error

As AI agents move from experimentation to operational deployment, organisations face a subtle but critical risk:

Applying human management assumptions to machine behaviour,

and machine management logic to human performance.

This category confusion distorts oversight, weakens controls, and obscures accountability (Holzinger, Zatloukal and Müller, 2025).

AI systems do not respond to motivation, morale, or encouragement.

Humans do not behave like optimisable control loops.

Yet governance structures frequently fail to distinguish between these domains.

People Managers: Regulating Social Systems

People management operates within deeply human variability shaped by:

• Emotion

• Cognitive load

• Fatigue

• Incentives

• Identity

• Perceptions of fairness

• Trust and psychological safety

Research consistently shows that psychological safety and trust are not cultural luxuries but performance stabilisers (Edmondson, 2018).

When social systems degrade, failure signals include:

Burnout

Disengagement

Silence

Conflict

Attrition

Conduct risk

Controls are therefore primarily relational:

• Leadership capability

• Feedback quality

• Role clarity

• Ethical norms

• Belonging and trust

AI / Agent Managers: Regulating Behavioural Control Systems

AI agents introduce a different form of variability arising from:

• Model limitations

• Data quality

• Environmental drift

• Workflow design

• Prompt structures

• Reward and metric design

• Tool integration

Unlike humans, AI systems:

• Scale instantly

• Fail silently

• Drift gradually

• Produce confident but incorrect outputs

Failure signals often appear as:

Confident errors

Silent degradation

Automation bias

Unintended actions

Cascade effects

Cost amplification

Effective controls therefore differ:

• Observability

• Guardrails

• Drift detection

• Escalation pathways

• Change control

• Incident response

(Srinivasan and Wei, 2026).
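Two of the controls above, drift detection and escalation pathways, can be sketched in a few lines of Python. This is a minimal illustration rather than a recommended implementation: the `DriftMonitor` class, the baseline error rate, the tolerance, and the window size are all hypothetical choices made for the example, and a real deployment would choose metrics and thresholds suited to the agent's task.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class DriftMonitor:
    """Tracks a rolling error rate for one agent and flags gradual degradation.

    All parameters are illustrative assumptions, not values from the article.
    """
    baseline_error_rate: float   # error rate accepted when the agent was approved
    tolerance: float = 0.05      # absolute increase tolerated before escalating
    window: int = 100            # number of most recent outcomes considered
    outcomes: deque = field(default_factory=deque)

    def record(self, was_error: bool) -> None:
        """Store one reviewed outcome and discard the oldest beyond the window."""
        self.outcomes.append(was_error)
        if len(self.outcomes) > self.window:
            self.outcomes.popleft()

    def error_rate(self) -> float:
        """Share of recent outcomes that were errors (0.0 if nothing recorded)."""
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_escalate(self) -> bool:
        """Escalate only once the window is full and the rate exceeds the limit."""
        window_full = len(self.outcomes) == self.window
        limit = self.baseline_error_rate + self.tolerance
        return window_full and self.error_rate() > limit


# Usage: a small window so the behaviour is easy to follow.
monitor = DriftMonitor(baseline_error_rate=0.02, tolerance=0.05, window=10)
for _ in range(10):
    monitor.record(False)        # ten correct outcomes: no escalation
healthy = monitor.should_escalate()
monitor.record(True)
monitor.record(True)             # two recent errors push the rate to 0.2
drifted = monitor.should_escalate()
```

The point of the sketch is that silent, gradual degradation only becomes visible if outcomes are sampled and compared against an agreed baseline, and that the escalation trigger is an explicit, reviewable rule rather than an individual's judgement.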

Why Governance Must Separate These Domains

Blurring human and AI management creates predictable risks.

1. Misclassified failures

AI errors framed as “technical issues” may actually represent:

Operational risk

Safety risk

Compliance exposure

Reputational harm

(Harvard Business Review, 2026).

2. Misapplied controls

Algorithmic management literature warns that excessive dashboard-driven control applied to humans can:

Reduce autonomy

Increase stress

Erode trust

Amplify disengagement

(OECD, 2025).

3. Diffused accountability

Autonomous systems complicate responsibility structures.

Who is accountable when an AI agent acts, recommends, or influences decisions?

Research on human control of AI emphasises the need for explicit supervisory and teaming models (Tsamados et al., 2025).

Without clarity, accountability drifts.

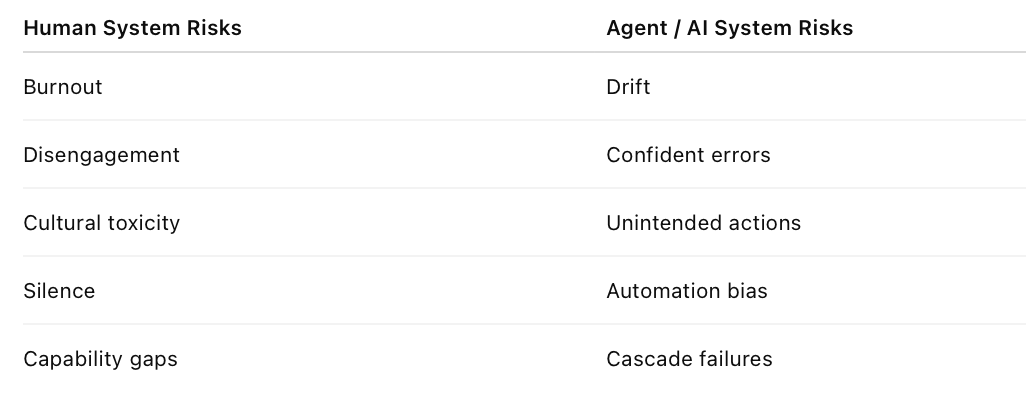

Different Systems → Different Failure Modes

Boards must recognise that organisations now operate across two interacting risk landscapes: the human social system and the AI behavioural control system.

Each requires distinct:

• Monitoring approaches

• Metrics

• Escalation mechanisms

• Assurance models

Governance Questions Boards Should Be Asking

1️⃣ Accountability

Who owns agent outcomes, failures, and harms?

(Srinivasan and Wei, 2026)

2️⃣ Autonomy Boundaries

What decisions/actions are delegated to AI?

(Tsamados et al., 2025)
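One way to make autonomy boundaries and ownership explicit is a delegation register that every agent action is checked against. The Python sketch below is illustrative only: the action names, the owners, and the `authorise` function are hypothetical, and the default-deny rule for unlisted actions is an assumption of this example rather than a requirement stated in the cited research.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"                            # agent may act on its own
    REQUIRE_HUMAN_APPROVAL = "human_approval"  # agent proposes, a human decides
    DENY = "deny"                              # never delegated to the agent


@dataclass(frozen=True)
class Delegation:
    decision: Decision
    owner: str  # the accountable human role for this action


# Hypothetical register: which actions are delegated, and who owns the outcome.
DELEGATION_REGISTER = {
    "draft_customer_reply": Delegation(Decision.ALLOW, "Head of Support"),
    "issue_refund": Delegation(Decision.REQUIRE_HUMAN_APPROVAL, "Finance Manager"),
    "delete_customer_record": Delegation(Decision.DENY, "Data Protection Officer"),
}


def authorise(action: str) -> Delegation:
    """Look up an action; anything not explicitly listed is denied by default."""
    return DELEGATION_REGISTER.get(action, Delegation(Decision.DENY, "unassigned"))


# Usage: a listed action and an action nobody has delegated.
reply = authorise("draft_customer_reply")
transfer = authorise("transfer_funds")
```

An "unassigned" owner in the result is itself a governance signal: the agent has attempted something for which no one has accepted accountability.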

3️⃣ Observability

How are performance, drift, and anomalies monitored?

(Harvard Business Review, 2026)

4️⃣ Incident Pathways

Are agent failures treated as operational incidents?

(Holzinger, Zatloukal and Müller, 2025)

5️⃣ Human Impact

How does AI adoption affect workload, autonomy, trust, and stress?

(OECD, 2025)

Systems Integrity Insight

AI does not eliminate management complexity.

It creates a second management discipline.

• People managers regulate relational and social dynamics

• Agent managers regulate behavioural and control dynamics

Governance effectiveness depends on recognising:

Different systems

Different variability

Different failure behaviours

Different control requirements

Conclusion

Organisations that fail to distinguish between human and AI management risk:

• Misapplied oversight

• Hidden vulnerabilities

• Drift in accountability

• Degradation in trust and reliability

Those who design governance explicitly for both domains improve:

Resilience

Safety

Reliability

Decision quality

In the AI era, system integrity depends not only on what technology does —

But on how organisations manage what technology becomes.

References (Harvard Style)

Edmondson, A.C. (2018) The Fearless Organization: Creating Psychological Safety in the Workplace for Learning, Innovation, and Growth. Hoboken, NJ: Wiley.

Harvard Business Review (2026) ‘Are You Ready to Manage AI Agents?’, Harvard Business Review, February.

Holzinger, A., Zatloukal, K. and Müller, H. (2025) ‘Is human oversight to AI systems still possible?’, New Biotechnology, 85, pp. 59–62.

OECD (2025) Algorithmic Management in the Workplace: New Evidence from an OECD Employer Survey. Paris: OECD Publishing.

Srinivasan, S. and Wei, V. (2026) ‘To Thrive in the AI Era, Companies Need Agent Managers’, Harvard Business Review, 12 February.

Tsamados, A., Floridi, L. and Taddeo, M. (2025) ‘Human control of AI systems: from supervision to teaming’, AI and Ethics, 5(2), pp. 1535–1548.